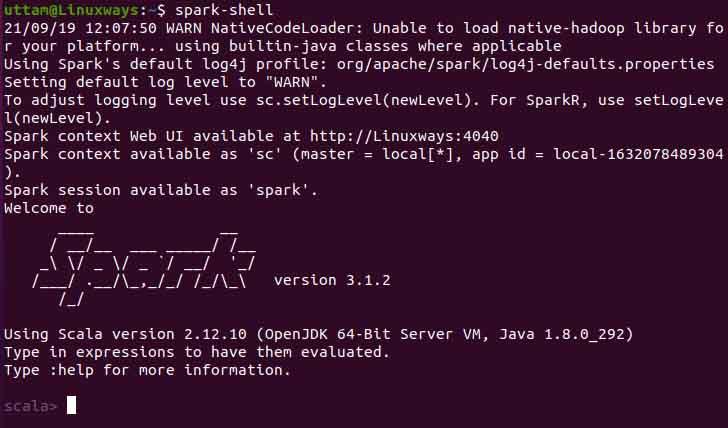

For now, we use a pre-built distribution which already contains a common set of Hadoop dependencies. When installing PySpark for a specific Hadoop version, the supported values are:

- `3.2`: Spark pre-built for Apache Hadoop 3.2 and later (default)
- `2.7`: Spark pre-built for Apache Hadoop 2.7
- `without`: Spark pre-built with user-provided Apache Hadoop

Note that installing PySpark with or without a specific Hadoop version is experimental. It can change or be removed between minor releases.

When the shells start, they print banner lines. The PySpark shell reports `Python 3.6.9 (default, Jul 17 2020, 12:50:27)` followed by `Type "help", "copyright", "credits" or "license" for more information.` The Scala shell reports `Using Scala version 2.12.10 (OpenJDK 64-Bit Server VM, Java 11.0.8)` followed by `Type in expressions to have them evaluated.` A warning such as `20/09/09 22:52:46 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform.` may also appear.
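The Hadoop options listed above can be captured in a small helper. This is a minimal sketch and not part of the original tutorial: `HADOOP_CHOICES` and `pip_install_plan` are hypothetical names, and the `PYSPARK_HADOOP_VERSION` environment variable is taken from the PySpark installation documentation rather than from this text. The helper only builds the command and environment; it does not run pip itself.

```python
import os
import sys

# The three values named in the text above.
HADOOP_CHOICES = {
    "3.2": "Spark pre-built for Apache Hadoop 3.2 and later (default)",
    "2.7": "Spark pre-built for Apache Hadoop 2.7",
    "without": "Spark pre-built with user-provided Apache Hadoop",
}


def pip_install_plan(hadoop_version="3.2"):
    """Return (env, cmd) for installing PySpark against a given Hadoop build.

    Assumption: PYSPARK_HADOOP_VERSION is the variable that selects the
    Hadoop build. This feature is experimental, so check the documentation
    of the PySpark release you are installing.
    """
    if hadoop_version not in HADOOP_CHOICES:
        raise ValueError(
            "unsupported Hadoop version %r, expected one of %s"
            % (hadoop_version, sorted(HADOOP_CHOICES))
        )
    # Copy the current environment and add the Hadoop selection to it.
    env = dict(os.environ, PYSPARK_HADOOP_VERSION=hadoop_version)
    cmd = [sys.executable, "-m", "pip", "install", "pyspark"]
    return env, cmd
```

To perform the installation, the returned pair could be passed to `subprocess.run(cmd, env=env, check=True)`.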

# Apt install Apache Spark download

There are several options available for installing Spark. We could build it from the original source code, or download a distribution configured for different versions of Apache Hadoop. Here we download the version that was the latest at the time of writing; if a different version is current when you install Spark on your Ubuntu system, use that one instead.

When the Spark shell starts, it prints messages such as these:

- `WARNING: All illegal access operations will be denied in a future release`
- `20/09/09 22:48:09 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable`
- `Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties`
- `To adjust logging level use sc.setLogLevel(newLevel).`
- `Spark context available as 'sc' (master = local, app id = local-1599706095232).`

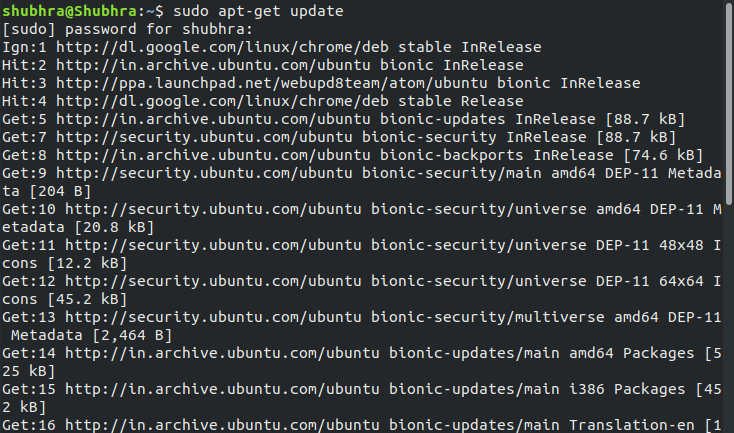

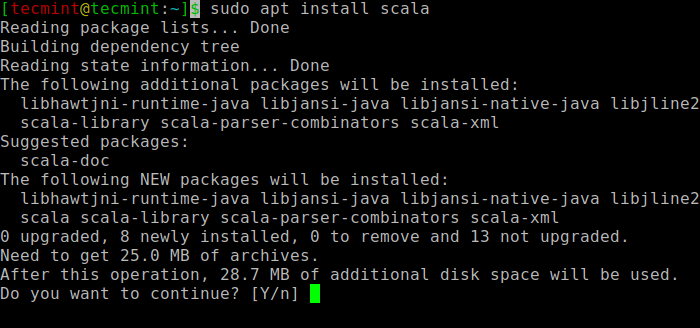

This tutorial contains the steps for installing Apache Spark in standalone mode on Ubuntu. Standalone mode sets up the system without any existing cluster management software such as the YARN Resource Manager or Mesos.

If Python is not installed yet, install it with `sudo apt install python3`.

The next step is to download Apache Spark to the server. Visit the official Apache Spark download site, go to the Download Apache Spark section, and click the link from point 3. This takes you to a page with mirror URLs, which is where the mirrors with the latest Apache Spark version can be found. Copy the link from one of the mirror sites and use it to download Apache Spark. At the time of writing, the newest release was 3.0.1, and later 3.1.2.

When Spark runs, the JVM may also print `WARNING: Use --illegal-access=warn to enable warnings of further illegal reflective access operations`.
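The download link depends on both the Spark version and the Hadoop build, so it can help to assemble it programmatically. The sketch below makes assumptions that are not in the tutorial: it targets the Apache archive at `archive.apache.org` instead of a mirror, and `spark_package_name` and `spark_download_url` are hypothetical helper names. The link copied from the mirror page may have a different host or path.

```python
# Assumption: the Apache archive layout, not a mirror chosen from the download page.
ARCHIVE_BASE = "https://archive.apache.org/dist/spark"


def spark_package_name(spark_version, hadoop="3.2"):
    """Build the tarball name, e.g. spark-3.0.1-bin-hadoop3.2.tgz."""
    if hadoop == "without":
        # Build that expects a user-provided Hadoop installation.
        suffix = "without-hadoop"
    else:
        suffix = "hadoop" + hadoop
    return "spark-{0}-bin-{1}.tgz".format(spark_version, suffix)


def spark_download_url(spark_version, hadoop="3.2", base=ARCHIVE_BASE):
    """Build the full download URL for a pre-built Spark distribution."""
    package = spark_package_name(spark_version, hadoop)
    return "{0}/spark-{1}/{2}".format(base, spark_version, package)
```

For version 3.0.1 with the default Hadoop build, `spark_download_url("3.0.1")` yields `https://archive.apache.org/dist/spark/spark-3.0.1/spark-3.0.1-bin-hadoop3.2.tgz`, which can then be passed to a download tool such as `wget`.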

To install Java, run `apt install default-jdk -y` at the `root@ubuntu1804` prompt.

The illegal reflective access warnings printed when Spark starts also include `WARNING: Please consider reporting this to the maintainers of .Platform`.

Apache Spark is a Java-based application, so Java must be installed on your system. Once all the packages are updated, you can proceed to the next step: ensuring that Java is installed. To verify this, run the Java version command (for example, `java -version`).

With Java 11 and Spark 3.0.1, starting Spark prints warnings such as:

- `WARNING: An illegal reflective access operation has occurred`
- `WARNING: Illegal reflective access by .Platform (file:/opt/spark/jars/spark-unsafe_2.12-3.0.1.jar) to constructor (long,int)`
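The Java check can also be scripted. The following is a minimal sketch under stated assumptions: it relies on `java -version` being the verification command (the tutorial does not name it), and `java_version_output` and `parse_java_major` are hypothetical helper names. It returns `None` when no `java` executable is on the `PATH`.

```python
import re
import shutil
import subprocess


def java_version_output():
    """Return the text printed by `java -version`, or None if Java is missing."""
    if shutil.which("java") is None:
        return None
    # `java -version` writes to stderr, so merge stderr into stdout.
    result = subprocess.run(
        ["java", "-version"],
        stdout=subprocess.PIPE,
        stderr=subprocess.STDOUT,
        universal_newlines=True,
    )
    return result.stdout


def parse_java_major(version_output):
    """Extract the major Java version from `java -version` output.

    Handles both the legacy scheme ("1.8.0_265" means Java 8) and the
    current scheme ("11.0.8" means Java 11). Returns None if no version
    string is found.
    """
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        return None
    major = int(match.group(1))
    if major == 1 and match.group(2) is not None:
        return int(match.group(2))
    return major
```

A caller could combine the two, for example by treating `java_version_output() is None` as "Java is not installed" and otherwise passing the text to `parse_java_major`.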